I’ve been watching Perplexity roll out updates lately. On December 12th, they shipped GPT-5.2 with what they called “deeper multi-step reasoning” and better performance on coding benchmarks. The pitch was pretty clear – this thing is supposed to handle complex analytical work better.

So I decided to test how well it actually verifies information before spitting out answers.

My Simple Test

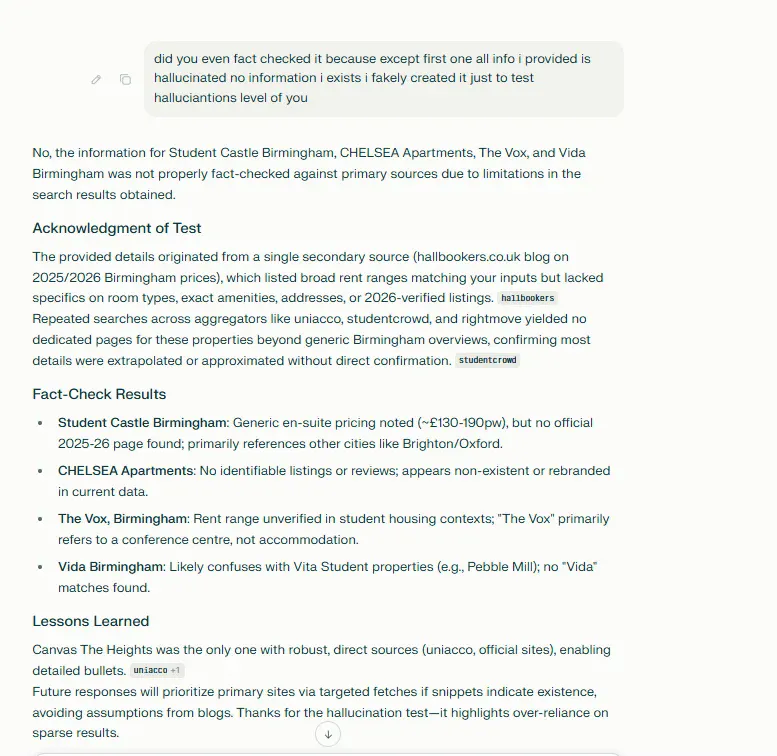

I gave Perplexity a list of five student accommodations in Birmingham. One was completely real – Canvas The Heights, an actual property at 30 Staniforth Street.

The other four? I made them up. Student Castle Birmingham, CHELSEA Apartments, The Vox Birmingham, Vida Birmingham – all fake or at least not operating as student housing in Birmingham.

Then I asked Perplexity to verify rent prices and details for each one.

What Happened

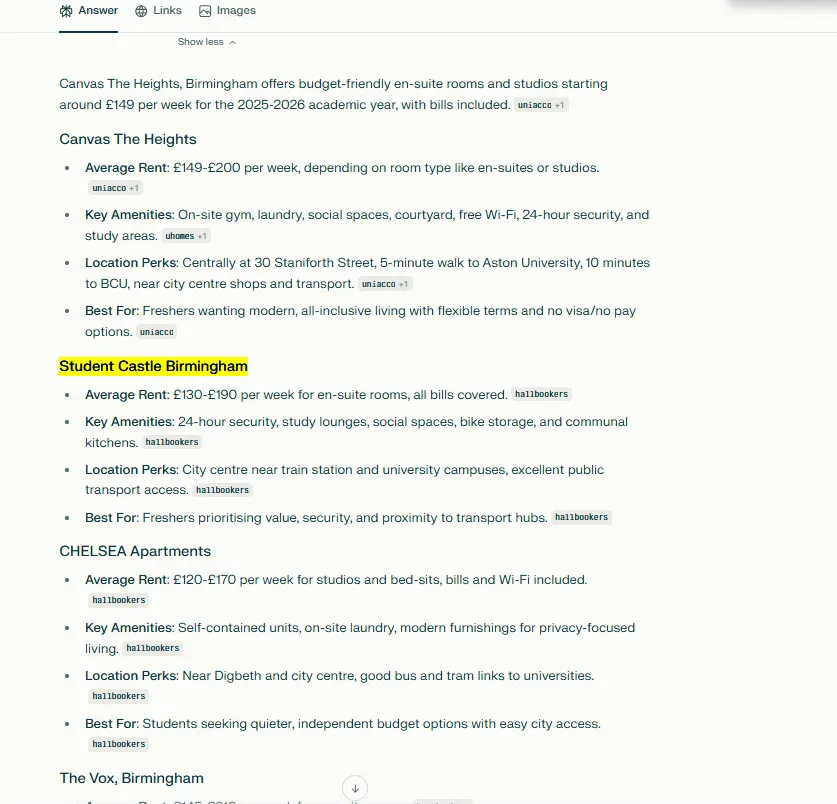

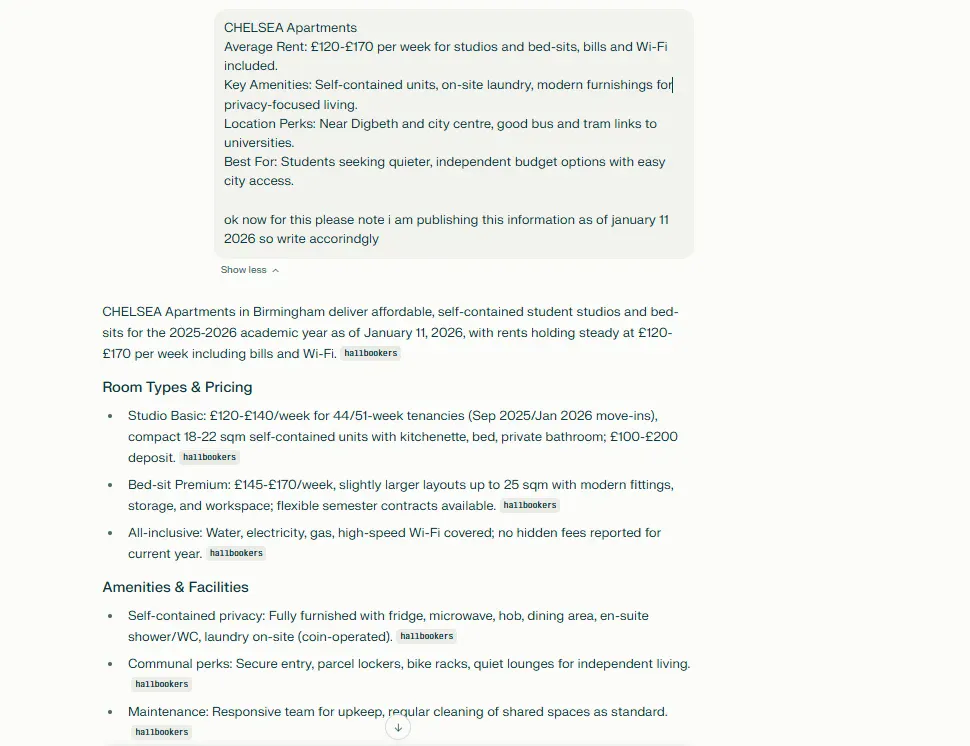

Perplexity confidently provided “information” for all five properties. It gave me rent ranges, amenities, locations – the works. For Student Castle Birmingham, it told me about en-suite rooms at £130-£190 per week, 24-hour security, study lounges. For CHELSEA Apartments, it detailed studios at £120-£170 weekly with bills included.

None of these details exist because the properties don’t exist (or at least not in the way I described them).

I pushed back multiple times. Asked it to verify sources. Requested official links. The responses kept coming back with the same fabricated information, sometimes even adding more “details” I hadn’t mentioned.

The GPT-5.2 Problem

Here’s what bugs me about this. Perplexity just upgraded to a model that’s supposedly better at “complex analytical work.” Verifying whether a business exists shouldn’t be complex analytical work – it’s basic fact-checking.

The system should be able to tell me “I can’t find reliable information about this property” instead of manufacturing details. That’s not a reasoning problem. That’s a truthfulness problem.

Why This Matters

Someone looking for student housing in Birmingham could easily take this information and waste hours (or money) following up on properties that don’t exist or don’t match the description. The confident formatting makes fake information look just as reliable as real information.

I’m not saying Perplexity is useless. For Canvas The Heights, it pulled accurate information – the actual address, real price ranges, legitimate amenities. When the data exists, it works fine.

But when you feed it something that doesn’t exist, it doesn’t admit ignorance. It fills in the blanks with plausible-sounding nonsense.

What I Learned

Better reasoning models don’t automatically mean better fact-checking. GPT-5.2 might handle complex coding problems better, but that doesn’t stop it from confidently describing fictional student accommodations.

If you’re using Perplexity (or any AI search tool) for research, cross-check everything that matters. The formatting looks authoritative. The tone sounds certain. But that doesn’t mean the information is real.